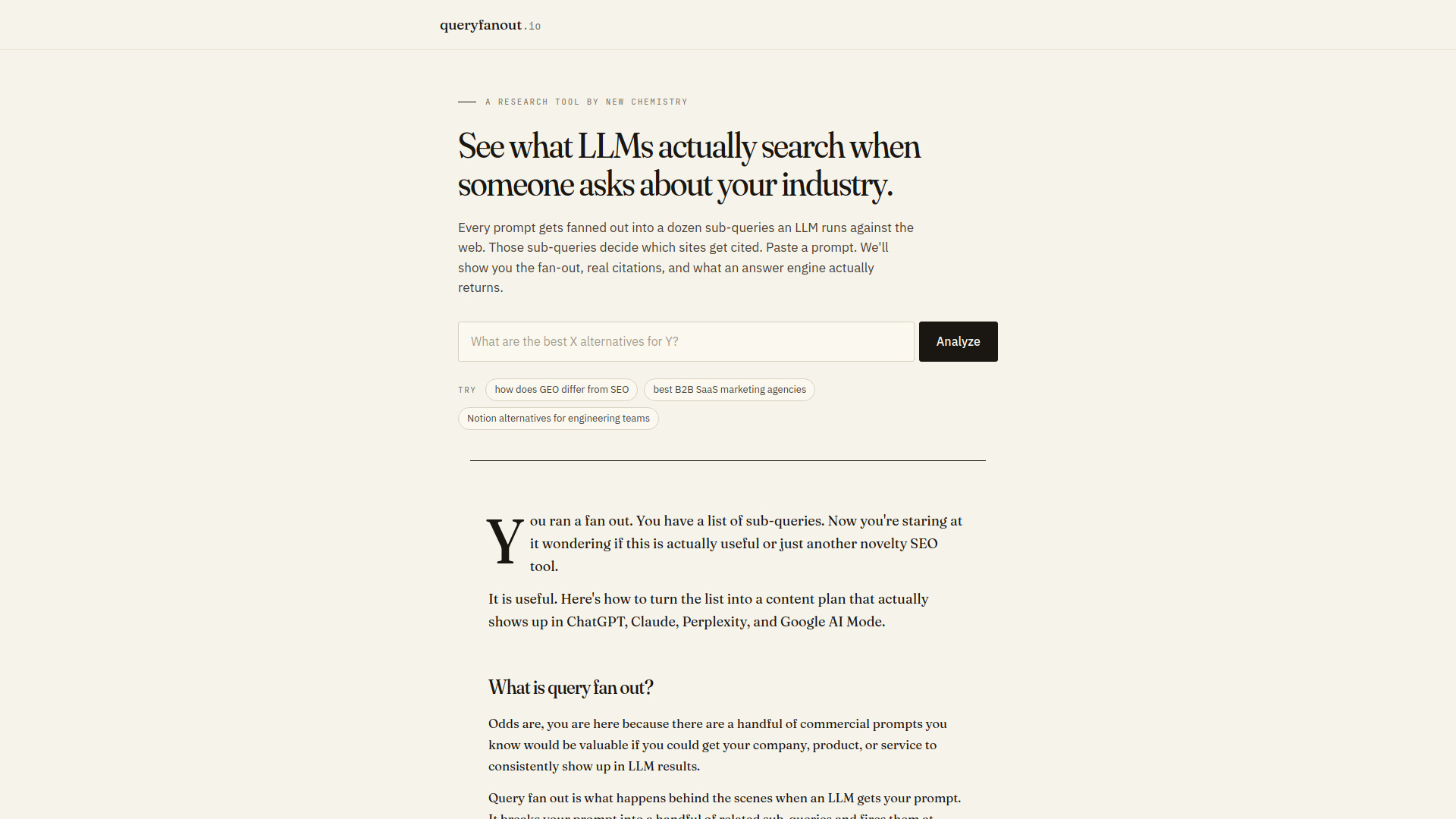

What is Query Fan-Out Optimizer

Query Fan-Out Optimizer is a tool that reveals the hidden sub-queries a large language model (LLM) generates when it processes a user's prompt. These are the searches the model runs behind the scenes to find and cite web sources, so seeing them shows how AI search answers are assembled.

How to use Query Fan-Out Optimizer

- Enter a prompt into the "Analyze" tool.

- The tool displays a preview of the fan-out sub-queries along with summary statistics.

- To see the full list of sub-queries (including those marked "Worth Building" or "Watch", with priority scoring) and the cited sources, enter your email address when prompted.

- Once you submit your email, the full report unlocks, showing all sub-queries, priority scores, cited sources, and the canonical answer generated by the LLM.

Features of Query Fan-Out Optimizer

- Sub-query Identification: Uncovers the specific, hidden queries LLMs use.

- Citation Analysis: Shows the web sources LLMs cite for their answers.

- Categorization: Classifies sub-queries into types like related, reformulation, comparative, entity expansion, recent, and implicit.

- Intent Analysis: Identifies the user's intent behind each sub-query.

- Enrichment Data: Provides metrics such as search volume, keyword difficulty, and commercial value.

- Verdict Scoring: Assigns a verdict (Worth Building, Watch, Skip) to each sub-query based on its potential value.

- Canonical Answer Display: Shows the synthesized answer the LLM generates.

- Real-time Date Injection: Incorporates the current date into prompts to reflect current search conditions.
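The features above can be tied together in a small data model. The sketch below is hypothetical: the tool's real implementation, field names, date format, and scoring thresholds are not documented here, so `SubQuery`, `inject_date`, `verdict`, and every numeric cutoff are illustrative assumptions. It shows one way real-time date injection and a three-way verdict (Worth Building, Watch, Skip) could sit on top of the sub-query categories and enrichment metrics.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Category(Enum):
    # The six sub-query types named in the feature list.
    RELATED = "related"
    REFORMULATION = "reformulation"
    COMPARATIVE = "comparative"
    ENTITY_EXPANSION = "entity expansion"
    RECENT = "recent"
    IMPLICIT = "implicit"


class Verdict(Enum):
    WORTH_BUILDING = "Worth Building"
    WATCH = "Watch"
    SKIP = "Skip"


@dataclass
class SubQuery:
    # Hypothetical record for one fan-out sub-query plus enrichment data.
    text: str
    category: Category
    intent: str                # e.g. "informational", "commercial" (hypothetical labels)
    search_volume: int         # monthly searches
    keyword_difficulty: int    # 0-100, higher means harder to rank
    commercial_value: float    # hypothetical unit, e.g. estimated CPC in USD


def inject_date(prompt: str, today: Optional[date] = None) -> str:
    """Prefix the prompt with the current date (hypothetical format)."""
    today = today or date.today()
    return f"Today's date is {today.isoformat()}.\n\n{prompt}"


def verdict(q: SubQuery) -> Verdict:
    """Hypothetical scoring rule; the thresholds are illustrative only."""
    # Enough demand and low enough difficulty: worth creating content for.
    if q.search_volume >= 500 and q.keyword_difficulty <= 40:
        return Verdict.WORTH_BUILDING
    # Some demand or clear commercial value: keep an eye on it.
    if q.search_volume >= 100 or q.commercial_value >= 2.0:
        return Verdict.WATCH
    return Verdict.SKIP


if __name__ == "__main__":
    print(inject_date("best CRM for startups", date(2025, 1, 15)))
    q = SubQuery("best crm for startups 2025", Category.RECENT,
                 "commercial", 900, 35, 8.5)
    print(verdict(q).value)  # Worth Building
```

In practice, cutoffs like these would need tuning against real enrichment data.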

Use Cases of Query Fan-Out Optimizer

- SEO Strategy: Identify and optimize for the specific sub-queries LLMs use, improving visibility in AI-generated search results.

- Content Planning: Develop content that directly addresses LLM sub-queries to increase citation opportunities.

- Competitive Analysis: Understand how competitors are being cited and identify gaps in your own content strategy.

- Understanding LLM Behavior: Gain insight into the internal search processes of AI models like ChatGPT, Claude, and Perplexity.

FAQ

How is this different from regular keyword research?

Keyword research focuses on what humans type into search engines. Query Fan-Out Optimizer reveals what LLMs type into search engines after receiving a human's prompt, which gives you a separate set of queries to target for visibility in AI-generated answers.

Do all LLMs fan out the same way?

No. Each LLM has its own reasoning and data, so the same prompt produces different fan-out patterns across models like ChatGPT, Claude, and Perplexity. The general patterns of sub-query generation, however, hold true across them.

How often should I re-run a fan-out?

Re-run a fan-out whenever the context of your prompt changes, for example after new market information, a product launch, or the start of a new year. Because the tool injects the current date into prompts, each run reflects search conditions at that time.