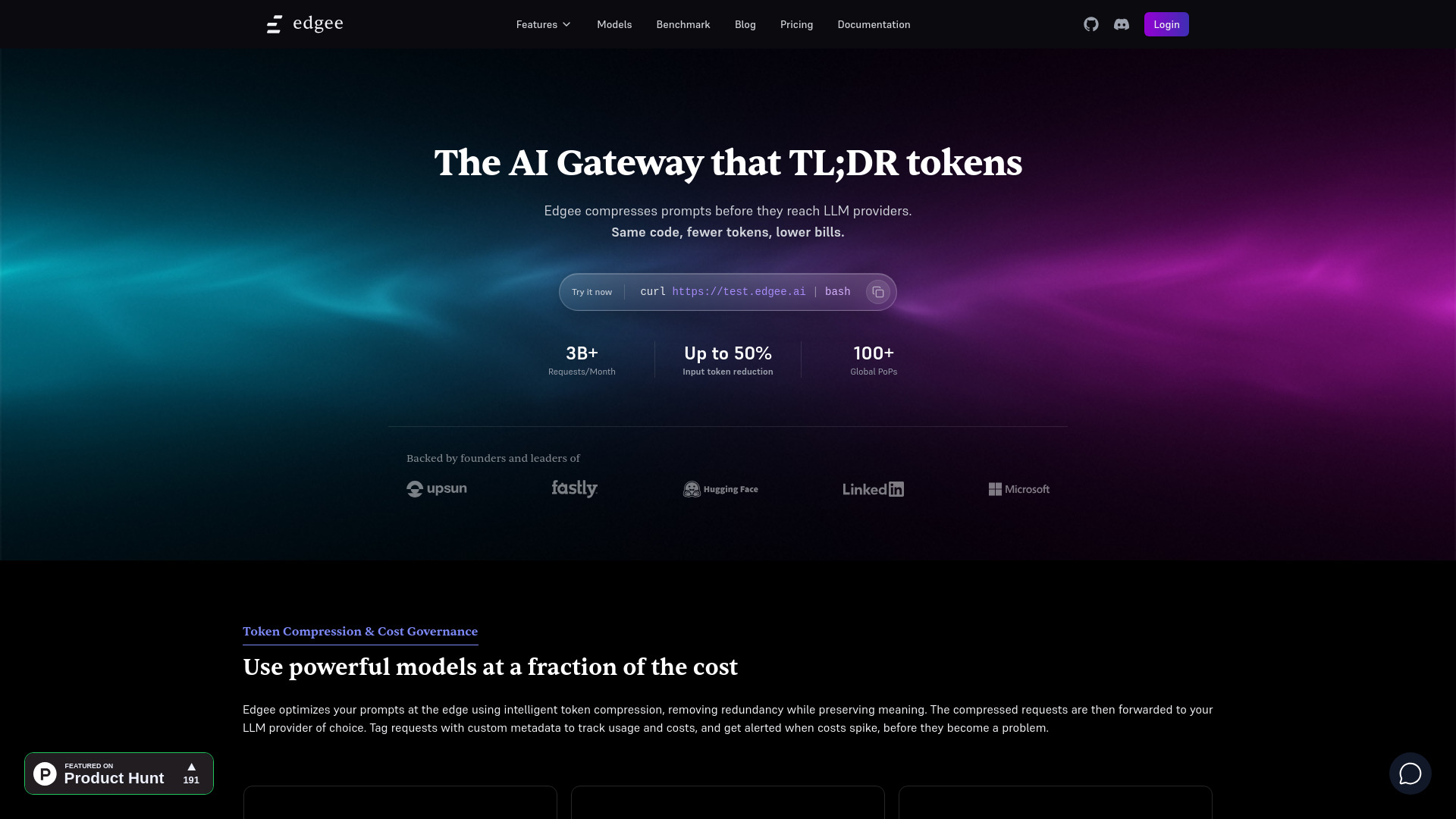

What is Edgee

Edgee is an AI gateway that reduces LLM costs by up to 50% through edge-native token compression. It provides an OpenAI-compatible API to connect to over 200 models, optimizing LLM usage by compressing prompts at the edge, routing to cost-efficient models, and applying intelligent policies.

How to use Edgee

- Integrate with your application: Call Edgee using a standard OpenAI-compatible API.

- Use Edgee's SDKs: Edgee provides SDKs for TypeScript, Python, Go, and Rust.

- Deploy Edge Tools: Run shared or private tools at the edge.

- Manage Keys: Use Edgee's keys or bring your own provider keys.

- Monitor and Govern: Track usage and costs, and set up alerts for cost spikes.
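Because the API is OpenAI-compatible, a call to Edgee should look like a standard OpenAI chat completions request. The sketch below builds such a request using only Python's standard library. The base URL, the `/chat/completions` path, and any model name you pass are assumptions for illustration, not documented Edgee values. Check Edgee's documentation for the real endpoint and model identifiers.

```python
import json
import urllib.request

# Hypothetical base URL: the real Edgee endpoint is not given in this description.
EDGEE_BASE_URL = "https://api.example-edgee-gateway.com/v1"


def build_chat_request(api_key: str, model: str, messages: list[dict]) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    # OpenAI-compatible body: a model identifier plus a list of role/content messages.
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{EDGEE_BASE_URL}/chat/completions",
        data=body,
        headers={
            # Assumed: the gateway key is sent as a bearer token, as with OpenAI.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (requires network access)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

For example, `send(build_chat_request("YOUR_EDGEE_API_KEY", "gpt-4o", [{"role": "user", "content": "Hello"}]))` would post a single-message chat. The key placeholder and model name are hypothetical. With an existing OpenAI client library, pointing its base URL at the gateway is typically the main change needed.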

Features of Edgee

- Token Compression: Reduces prompt size at the edge to lower costs and latency.

- Cost Reduction: Achieves up to 50% cost savings.

- Universal Compatibility: Works with over 200 models from providers such as OpenAI, Anthropic, Google (Gemini), xAI, and Mistral.

- Intelligent Routing: Routes requests to cost-efficient models.

- Edge Tools: Deploy and run tools at the edge for faster processing.

- BYOK (Bring Your Own Keys): Option to use your own provider API keys for direct billing and custom models.

- Observability: Monitor latency, errors, usage, and cost per model.

- Cost Governance: Tag requests with custom metadata (e.g., feature, team, project) for usage tracking and set up cost alerts.

- Semantic Preservation: Compresses prompts while maintaining context and intent.

- OpenAI-Compatible API: Provides a unified API for various LLM providers.

- Private Models: Deploy serverless open-source LLMs at the edge.
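A small cost calculation makes the "up to 50%" figure easier to reason about. This calculation assumes that compression shortens only the prompt (input tokens) and leaves the completion unchanged. Under that assumption, the saving on a request's total cost depends on how much of that cost comes from input tokens. The prices used below are hypothetical placeholders, not any provider's real rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in USD (illustrative values, not real provider rates)."""
    input_per_million: float
    output_per_million: float


def request_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost of one request: token counts times the per-token price for each direction."""
    return (input_tokens * pricing.input_per_million
            + output_tokens * pricing.output_per_million) / 1_000_000


def compression_savings(input_tokens: int, output_tokens: int,
                        compression_ratio: float, pricing: Pricing) -> float:
    """Fraction of total request cost saved when only the prompt is compressed.

    compression_ratio is the fraction of input tokens removed (0.5 means half).
    Output tokens are assumed to be unaffected by compression.
    """
    if not 0.0 <= compression_ratio <= 1.0:
        raise ValueError("compression_ratio must be between 0 and 1")
    baseline = request_cost(input_tokens, output_tokens, pricing)
    if baseline == 0:
        return 0.0  # nothing to save on an empty request
    compressed_input = round(input_tokens * (1 - compression_ratio))
    compressed = request_cost(compressed_input, output_tokens, pricing)
    return (baseline - compressed) / baseline
```

With hypothetical prices of $2.50 per million input tokens and $10 per million output tokens, consider a request with a 10,000-token prompt compressed by half and a 500-token completion. That request saves about 41.7% of its cost. A request with no output tokens would save the full 50%. Longer completions dilute the saving, because prompt compression does not touch output tokens.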

Use Cases of Edgee

- LLM Cost Optimization: Significantly reduce expenses associated with using large language models.

- Performance Improvement: Lower latency by processing requests closer to users and providers.

- AI Feature Development: Ship AI features faster with a managed gateway and tools.

- Data Privacy: Enhance privacy by processing data at the edge.

- A/B Testing and Personalization: Modify HTML or responses dynamically at the edge.

- Security: Implement protection layers like bot detection and rate limiting.

- Consent Management: Integrate consent management platforms (CMPs) and enforce consent upstream.

- Identity Management: Handle user identification with first-party cookies and universal IDs.

Pricing

Edgee offers an open-source platform with a free tier and paid plans for enterprise features. Specific prices are not listed here.

FAQ

- What is Edgee? Edgee is an edge-native AI gateway that optimizes LLM costs through token compression, intelligent routing, and edge processing. It provides one OpenAI-compatible API to connect to 200+ models while reducing costs by up to 50%.

- How does Edgee work? Your app calls Edgee with a standard OpenAI-compatible API. Edgee compresses prompts at the edge to reduce token usage, routes requests to cost-efficient models, and applies intelligent policies before forwarding them to LLM providers, all while tracking real-time cost savings.

- Can I use my own provider API keys? Yes. You can use Edgee’s unified access with a single Edgee API key, or bring your own provider keys for direct billing and custom models.

- What do I get with Edgee? Up to 50% cost reduction through token compression, one OpenAI-compatible API for 200+ models, intelligent cost-aware routing, real-time savings tracking, and edge-level capabilities, with instant ROI from day one.
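Cost-aware routing can be pictured as choosing the cheapest model that still meets a request's requirements. This description does not spell out Edgee's actual routing policies, so the sketch below uses a simple, hypothetical rule. It filters a catalog by a minimum quality tier, picks the option with the lowest estimated cost, and reports the saving against a fixed baseline model. Model names, tiers, and prices are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    """A candidate model in a hypothetical routing catalog."""
    name: str
    quality_tier: int          # higher means more capable (illustrative scale)
    input_per_million: float   # USD per million input tokens (illustrative)
    output_per_million: float  # USD per million output tokens (illustrative)

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Estimated USD cost of one request on this model."""
        return (input_tokens * self.input_per_million
                + output_tokens * self.output_per_million) / 1_000_000


def route(catalog: list[ModelOption], min_tier: int,
          input_tokens: int, output_tokens: int) -> ModelOption:
    """Pick the cheapest model whose quality tier meets the requirement."""
    eligible = [m for m in catalog if m.quality_tier >= min_tier]
    if not eligible:
        raise LookupError(f"no model meets quality tier {min_tier}")
    return min(eligible, key=lambda m: m.cost(input_tokens, output_tokens))


def savings_vs(baseline: ModelOption, chosen: ModelOption,
               input_tokens: int, output_tokens: int) -> float:
    """USD saved by the routed model relative to always using the baseline model."""
    return baseline.cost(input_tokens, output_tokens) - chosen.cost(input_tokens, output_tokens)
```

Consider a catalog of three models:
- `large-model`: tier 3, $5 per million input tokens and $15 per million output tokens.
- `medium-model`: tier 2, $1 input and $4 output.
- `small-model`: tier 1, $0.20 input and $0.80 output.

A tier-2 request with 2,000 input and 500 output tokens routes to `medium-model`. It costs $0.004 instead of $0.0175 on `large-model`, a saving of $0.0135. A production router would also weigh latency, availability, and task fit, which this sketch ignores.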